GPT-5-Codex Explained: Why OpenAI’s New “Enduring” Coding Agent Matters

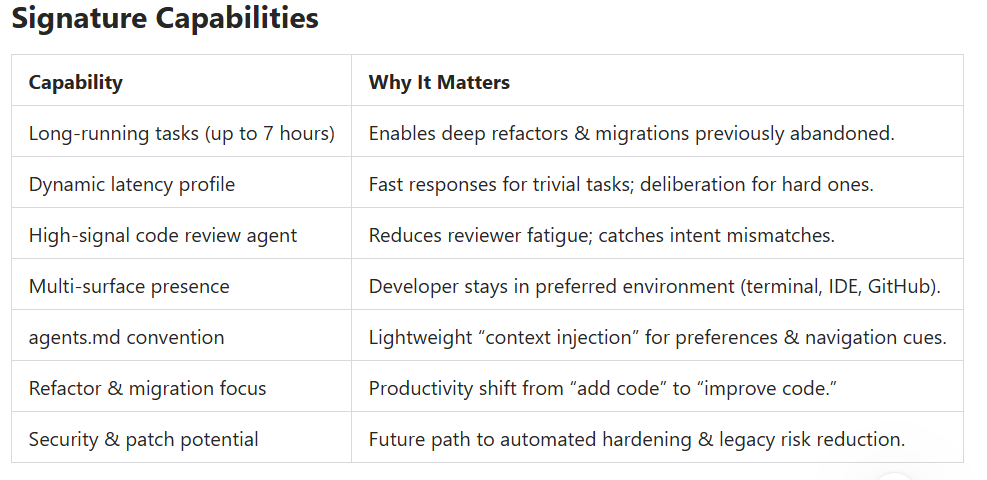

GPT-5-Codex introduces long-running coding agents, seven-hour refactors, smarter code review, and a new blueprint for autonomous software engineering.

Key Takeaways:

- Dynamic “thinking window”: tasks can run seconds to 7 hours.

- 74.5% SWE-bench score (near GPT‑5 thinking variant on 477 subset).

- Persistence + “grit” for multi-hour refactors = new differentiator.

- Multi-surface access: CLI, Codex Cloud (ex ChatGPT Codex), IDE extension, GitHub review bot.

- Harness concept: tooling + runtime loops are as critical as the model.

- Code review agent crosses the usefulness threshold (high signal, low noise).

- agents.md emerges as a lightweight preference + navigation guide.

- Shift from raw generation to migration, refactor, security patching, large-scale codebase stewardship.

- Future: multi-agent swarms, scalable oversight, compute scarcity as a strategic constraint.

On September 16, OpenAI officially launched a new model, GPT-5-Codex—a fine‑tuned variant of GPT‑5 specifically designed for its various AI‑assisted programming tools. The company says the new model’s “thinking” time is more dynamic than earlier versions: the time it takes to complete a coding task can range from a few seconds to seven hours.

As a result, it performs better on agent coding benchmark tests. The release of GPT‑5-Codex effectively brings to a close what may have been the most dramatic sentiment swing lately in the “coding agents” space. For more than a year—starting from Claude 3.5 Sonnet last June, then 3.7 Sonnet and Claude Code in February, and Claude 4 in May—Anthropic had been far ahead in coding scenarios, holding a dominant position. During this period the company’s revenue skyrocketed to 5 billion USD (10% from Claude Code), and its market cap surged to 183 billion USD—an increase of 122 billion. All this clearly reignited OpenAI’s fighting spirit.

Keep in mind, as early as 2021 OpenAI released the original Codex, which gave rise to GitHub Copilot—the world’s first AI programming tool (to which 182 developers are still actively contributing today). GPT‑3 also inspired Debuild, foreshadowing the later “vibe coding” startup wave. Since then OpenAI has re‑prioritized coding capabilities in o1 and GPT‑4.1. GPT‑5-Codex scores 74.5% on SWE-bench, almost matching GPT‑5 (thinking) at 74.9% on the 477 subset.

So what has driven the sharp turnaround in overall perception of GPT‑5? One reason: the Codex team is “working extremely hard.”

First: a “multi-faceted unified” agent.

Greg Brockman mentioned in a podcast today: “At the start of the year we set a company goal: build an agent-like software engineer by year’s end. Figuring out what that really means, how to implement it, and how to integrate all the opportunities and compute—this has been a huge task shared by many people at OpenAI.” The initial agent-style SWE shell was called 10X and ran in the terminal. Now, with the new Codex CLI, “ChatGPT Codex” (now renamed Codex Cloud), IDE extensions (surpassing 800K installs in 2.5 weeks), and a GitHub code review bot, OpenAI has formed a complete set of interaction surfaces covering diverse needs.

Second: better post-training characteristics.

OpenAI has always emphasized tight integration of research and product. The podcast also mentioned several important traits, the most significant being major improvements in “long-running agent tasks.” Thibault Sottiaux said: “This model shows a capability: it can persist longer, with the ‘grit’ needed for complex refactoring tasks.

But at the same time, for simple tasks it responds very fast—it doesn’t overthink before giving an answer. That makes it a great collaborator—you can ask questions, locate code, plan a solution; and once you let it go, it can work continuously for a long stretch. We’ve seen it internally work seven hours straight to finish a complex refactor—something we’d never seen before. We’ve also invested heavily in code quality; it’s optimized for the real needs of Codex users.” This cleverly leveraged “grit” is the key that makes GPT‑5-Codex a more comprehensive, practical agent-style programming model. It’s not just optimized for the hardest problems while forcing users to switch to a “dumber” model for easier tasks.

What Is GPT-5-Codex?

GPT-5-Codex is a fine‑tuned variant of GPT‑5 built specifically for AI-assisted software engineering. Unlike earlier “autocomplete-first” paradigms, it acts more like an **enduring agent**: planning, iterating, executing tool loops, and persisting across long sessions without constant human prompting.

---

Why It’s a Turning Point

Earlier waves of coding AI emphasized:

1. Token-by-token completion.

2. Chat-based code Q&A.

3. Narrow refactors.

GPT-5-Codex reframes value around:

- Endurance: Multi-hour refactors that stick to a plan.

- Tool Integration: Runtime harnesses, sandboxed execution, IDE context, and remote “notebooks.”

- Reliability over Flash: Consistency in multi-step edits rather than one-off clever snippets.

- Workflow Coverage: From suggestion → modification → review → migration.

---

The “Harness” Advantage

The “harness” = orchestration layer: tools, file system access (scoped), agent loop, planning memory, terminal/IDE integration. Insight: Interface + runtime = half the product. A smarter model without the right harness feels slow or brittle; a well-instrumented harness elevates usable intelligence.

---

From Autocomplete to Autonomous Assistance

Old paradigm: “Type + accept ghost text.”

New paradigm: “Describe intent → agent plans → iterates until tests pass → surfaces diffs.”

This shifts developer energy toward architecture, constraints, and reviewing intent alignment—reducing mechanical typing and rote syntax recall.

---

Code Review: Crossing the Trust Threshold

Historically, automated review = noise.

GPT-5-Codex’s review agent:

- Understands declared design intent (e.g., in PR description).

- Traverses dependencies and contract layers.

- Surfaces non-obvious edge cases.

- Is “missed” when absent—signal it’s past the usefulness tipping point.

Implication: Review throughput scales without proportional human burnout.

Memory & Context: Present Limits

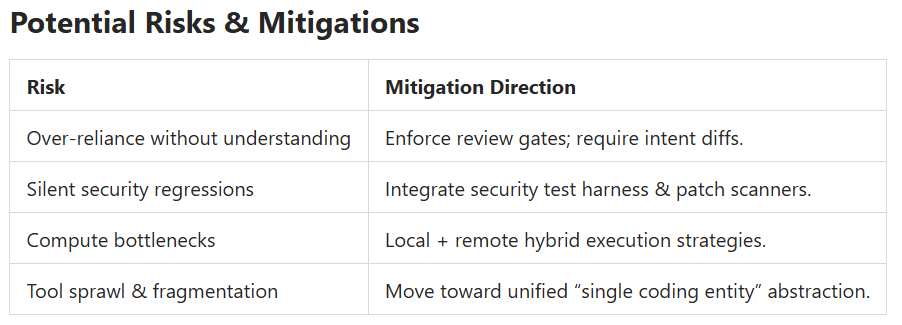

Current limitation: weak persistent memory across separate runs.

Workaround: agents.md + structured repo docs (readme, architecture notes).

Future research vectors: structured embeddings, durable episodic traces, supervised preference accumulation.

---

Strategic Industry Shift

Anthropic’s earlier momentum (Claude family + Claude Code) pushed competitive urgency.

GPT-5-Codex signals:

- Market maturing beyond benchmark one-upmanship.

- New battleground: *endurance, reliability, multi-agent orchestration, and developer ergonomics.*

- Coming differentiator: **scalable oversight** (human + weaker AI supervising stronger autonomous chains).

---

Migration, Legacy & Risk Reduction

High-value frontier:

- Large-scale library/API migrations.

- Legacy rewrites (e.g., COBOL → modern stacks).

- Security patch waves & dependency hygiene.

- Formal verification assistance (long-term defense posture).

Result: lowering “change cost” can unlock exponential modernization cycles.

---

Constraints: Compute as the New Scarcity

Vision: millions → billions of agents; potential future where “every developer (or org) has dedicated GPUs.”

Two imperatives:

1. Improve intelligence per unit compute (model efficiency).

2. Expand physical compute supply chain.

Strategic takeaway: **Optimize harness + agent design for latency & resource locality early.**

---

What This Means for Developers

- Learn to **direct agents** (intent clarity, constraint definition) as a core skill.

- Invest in architecture literacy; AI amplifies good structure, compounds bad structure.

- Treat code review AI as a collaborator—capture rationale, not just diffs.

- Adopt repository conventions (agents.md, docs) to frontload agent context.

FAQs:

What is GPT-5-Codex?

A GPT‑5 fine-tune optimized for autonomous, long-running software engineering workflows (refactoring, review, migration).

How is it different from earlier coding models?

Persistence, multi-environment harness integration, higher reliability in multi-step tasks, and a strong code review mode.

Does it replace developers?

No; it shifts human focus toward architecture, intent specification, and higher-level decision-making.

What is agents.md?

A convention file that encodes preferences + navigation/compression hints for the agent to accelerate onboarding to a codebase.

Why do long-running agents matter?

Complex refactors and migrations require sustained context, iterative validation, and resilience—previously impractical with short single-shot completions.

How should teams prepare?

Improve documentation, define coding standards, add comprehensive tests, and pilot code review agents in non-critical repos first.