Why AI models hallucinate and ways to prevent it

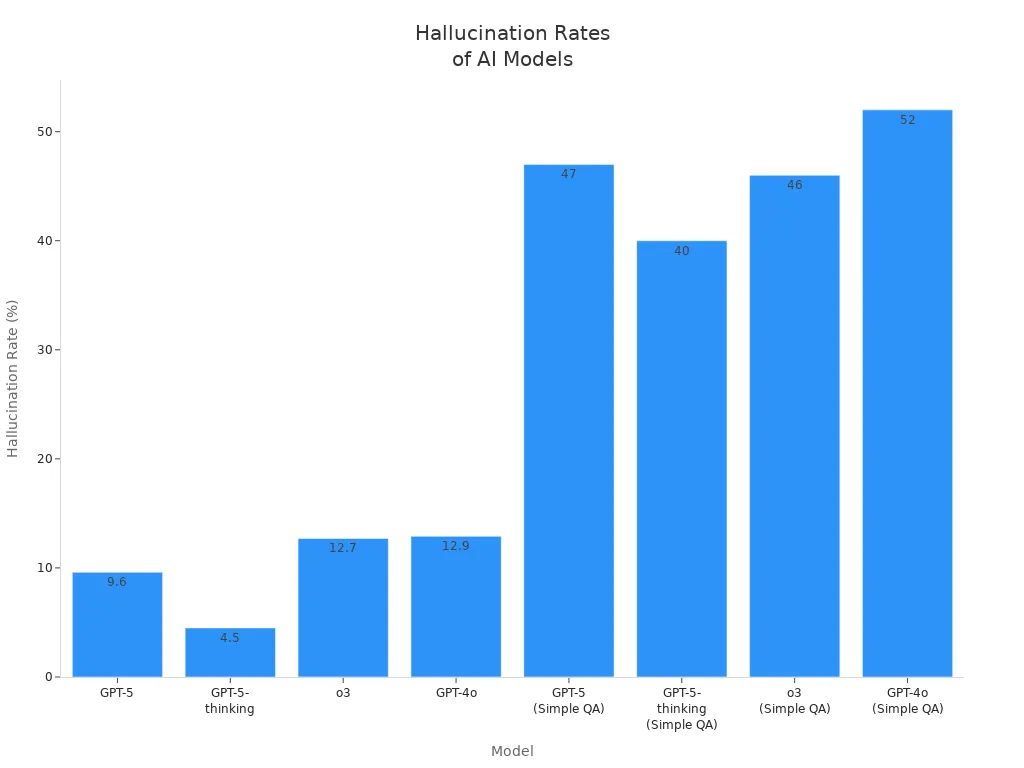

You may notice that AI sometimes gives answers that sound correct but are actually wrong. Experts call this AI hallucination. Hallucinations in large language models happen when the model makes up information or states facts that are not true. For example, a chatbot might invent details about a scientific study or cite legal cases that do not exist. Reported hallucination rates differ from model to model, as shown below:

| AI Model | Hallucination Rate |
|---|---|
| o3 | 33% |
| o4-mini | 48% |
| o1 | 16% |

Knowing why AI does this helps you use these tools better.

Key Takeaways

AI hallucination happens when AI gives answers that seem right but are wrong. Always check important facts with trusted sources.

Hallucinations can cause big problems in healthcare, law, and finance. Trusting AI too much can lead to bad choices.

Good training data is essential. Make sure the data is accurate, complete, and current so that AI is less likely to make up facts.

Using strong evaluation metrics helps find when AI is wrong. Giving AI rewards for saying "I don't know" can make it more accurate.

Developers should regularly review what AI says and update its training data. This helps people trust AI and keeps it working well.

AI Hallucination Explained

Definition and Impact

When you ask AI something, you want a true answer. Sometimes, AI gives answers that sound real but are made up. This is called AI hallucination. Experts have studied this problem and defined it in different ways. Some of these definitions appear in the table below:

| Source | Definition |
|---|---|
| | Times when an AI chatbot makes up fake or wrong information when you ask it something. |
| OpenAI (May 2023) | Making up facts when it does not know the answer. |
| OpenAI (May 2023) | Mistakes in thinking by the model. |
| CNBC (May 2023) | Making up things but acting like they are true. |
| The Verge (Feb 2023) | Creating information that is not real. |
| Cloudflare | Wrong or false answers from generative AI models, hidden in content that looks correct. |

You may ask why AI hallucination matters. If AI gives wrong answers, it can cause real harm. For example:

In healthcare, AI hallucination can mislead doctors and lead to unsafe care.

In legal work, hallucinations in large language models can produce fake cases and cause expensive problems.

In finance, AI can give false market predictions and cause large financial losses.

If you trust AI too much, you might make choices that hurt your health or business. Hallucinations in large language models can also make people lose trust in AI and damage a company’s reputation.

Hallucinations in Large Language Models

Large language models learn from large amounts of data to answer questions. These models predict which word comes next in a sentence. Sometimes, they do not have enough facts or clear details. When this happens, AI can give answers that look right but are not true. This is why hallucinations in large language models are common.

You see AI hallucination most often when you ask about rare facts or recent developments. Hallucinations in large language models can show up in many ways:

Making up quotes or numbers

Creating fake news stories

Giving wrong steps or instructions

Tip: Always check important information from AI, especially for work or school.

Hallucinations in large language models are a problem for everyone who uses AI. If you know what causes AI hallucination, you can use these tools more safely and effectively.

Causes of Misinformation

Next-Word Prediction

You might wonder why language models sometimes give wrong answers. The main reason is how AI works. AI predicts the next word in a sentence using patterns from huge sets of data. If the data has mistakes, AI can copy those mistakes.

AI often repeats what it learns, even if it is wrong.

Because of next-word prediction, AI can produce text that sounds right but is false.

If you ask AI about rare or new things, it may not know enough. This can cause AI hallucination: the answer may look correct without being true. Fact-checking is important for catching these mistakes before you rely on them.
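
To make next-word prediction concrete, here is a minimal sketch that assumes a toy bigram model trained on three invented sentences. Real language models use neural networks over far larger corpora, and the names `CORPUS`, `train_bigrams`, and `predict_next` are hypothetical. The point is only that the model returns the most frequent continuation, whether or not it is true.

```python
from collections import Counter, defaultdict

# Toy corpus invented for illustration. Two of the three sentences repeat an error.
CORPUS = [
    "the capital of australia is sydney",
    "the capital of australia is sydney",
    "the capital of australia is canberra",
]

def train_bigrams(sentences):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts

def predict_next(counts, word):
    """Return the most frequent next word and its estimated probability."""
    followers = counts[word]
    if not followers:
        return None, 0.0
    best, freq = followers.most_common(1)[0]
    return best, freq / sum(followers.values())

model = train_bigrams(CORPUS)
word, prob = predict_next(model, "is")
print(word, round(prob, 2))  # sydney 0.67
```

The wrong answer wins because it appears more often in the training text. Nothing in the prediction step checks facts.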

Low-Frequency Facts

AI has trouble with facts that do not show up often. These facts are uncommon in the training data. If AI does not have enough good data, it cannot learn these facts well. When you ask about something rare, AI may hallucinate and give wrong answers.

This happens when AI tries to answer questions about rare events, small towns, or new discoveries. AI answers are less accurate and less reliable when the training data holds too few examples.
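
One rough way to see the problem is to count how often each fact appears in a dataset. The sketch below assumes the facts have already been extracted as plain strings. The `fact_mentions` list (including the invented "smallville" entry) and the `min_count` threshold are hypothetical and chosen only for illustration.

```python
from collections import Counter

# Hypothetical fact mentions extracted from a training set.
fact_mentions = [
    "paris is the capital of france",
    "paris is the capital of france",
    "paris is the capital of france",
    "water boils at 100 c at sea level",
    "water boils at 100 c at sea level",
    "smallville was founded in 1887",  # invented example, appears only once
]

def rare_facts(mentions, min_count=2):
    """Return facts seen fewer than min_count times, sorted for stable output."""
    counts = Counter(mentions)
    return sorted(fact for fact, n in counts.items() if n < min_count)

def rare_fraction(mentions, min_count=2):
    """Fraction of distinct facts that are rare under the chosen threshold."""
    distinct = set(mentions)
    if not distinct:
        return 0.0
    return len(rare_facts(mentions, min_count)) / len(distinct)

print(rare_facts(fact_mentions))
print(round(rare_fraction(fact_mentions), 2))
```

Facts flagged this way are the ones a model has had the least chance to learn, so questions about them deserve extra verification.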

Evaluation Incentives

How AI is evaluated also affects hallucination. Today, AI is often rewarded for giving an answer, even when it is not sure. This is like guessing on a test when you do not know the answer. AI learns to guess instead of saying, "I don't know."

Studies show these incentives make AI hallucinate more. If you want better answers, you need evaluation systems that reward honesty and penalize wrong guesses. This helps AI avoid spreading false information and lowers the risk of mistakes.
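
The test analogy can be made concrete with a small expected-score calculation. This is a sketch under stated assumptions: the grading scheme (`reward`, `penalty`, and a score of 0 for abstaining) is hypothetical and is not taken from any published benchmark.

```python
def expected_guess_score(p_correct, reward=1.0, penalty=0.0):
    """Expected score of answering when the answer is right with probability p_correct."""
    return p_correct * reward - (1.0 - p_correct) * penalty

def should_answer(p_correct, reward=1.0, penalty=0.0, abstain_score=0.0):
    """Answer only when guessing beats abstaining in expectation."""
    return expected_guess_score(p_correct, reward, penalty) > abstain_score

# Accuracy-only grading: wrong answers cost nothing, so any guess beats "I don't know".
print(should_answer(0.2))               # True
# With a penalty of 1 for wrong answers, a 20% guess no longer pays off.
print(should_answer(0.2, penalty=1.0))  # False
```

With a reward of 1 and a penalty of 1, guessing pays off only when the chance of being right exceeds 50%. Under accuracy-only grading, a guess always beats abstaining.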

Note: New studies show that AI-generated misinformation can spread quickly, even from small accounts. It tends to focus on entertaining content and to sound more positive, and it is slightly less believable and less harmful than conventional misinformation.

| Characteristic | Description |
|---|---|
| Focus | More often about fun content and usually sounds more positive than regular misinformation. |
| Origin | More likely to come from smaller user accounts. |
| Virality | Much more likely to spread quickly even from small accounts. |
| Believability | A little less believable and harmful than regular misinformation. |

Knowing these causes helps you use AI safely and get better results.

Reducing AI Hallucination

High-Quality Training Data

You can help reduce AI hallucination by using better training data. If the data is accurate and current, AI is less likely to make up facts. Bad data can make AI give wrong answers. The table below shows how different data problems can affect AI’s answers:

| Issue Type | Description |
|---|---|
| Inaccurate Data | The model learns from data that contains errors. |
| Incomplete Data | Missing information forces the model to fill gaps on its own. |
| Outdated Data | Data that no longer reflects the current state of affairs can lead to irrelevant responses. |
| Biased Data | Training data with bias skews the model’s understanding, resulting in biased outputs. |
| Irrelevant Data | Including data that does not pertain to the target context confuses the model. |
| Misleading Data | Data that is misleading can drive the model toward incorrect conclusions. |
| Duplicated Data | Repetition in the training set can overemphasize certain facts. |
| Poorly Structured Data | Disorganized data makes it difficult for the model to learn clear patterns. |

You can improve data by checking facts and consulting experts. You should also remove outdated or incorrect information. Keeping data fresh helps AI give better answers. These steps make AI less likely to make things up.
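
Some of these checks can be automated. The sketch below assumes each record is a dictionary with hypothetical `text` and `updated` fields, and it drops incomplete, outdated, and duplicated records. It cannot detect inaccurate, biased, or misleading content, which still needs review by people.

```python
from datetime import date

# Hypothetical records; the field names are assumptions for this sketch.
records = [
    {"text": "Product X supports SSO.", "updated": date(2024, 6, 1)},
    {"text": "Product X supports SSO.", "updated": date(2024, 6, 1)},  # duplicate
    {"text": "", "updated": date(2024, 5, 1)},                         # incomplete
    {"text": "Product X costs $5.", "updated": date(2019, 1, 1)},      # outdated
]

def clean(records, cutoff):
    """Drop empty, outdated, and duplicate records, keeping the original order."""
    seen = set()
    kept = []
    for rec in records:
        text = rec["text"].strip()
        if not text:                  # incomplete: no usable text
            continue
        if rec["updated"] < cutoff:   # outdated: last updated before the cutoff
            continue
        key = text.lower()
        if key in seen:               # duplicate: same text already kept
            continue
        seen.add(key)
        kept.append(rec)
    return kept

cleaned = clean(records, cutoff=date(2023, 1, 1))
print(len(cleaned))  # 1
```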

Tip: Always fact-check, and have human reviewers verify facts to find mistakes early.

Improved Evaluation Metrics

You can lower hallucination by using sound methods to evaluate AI answers. Good metrics help you see when AI gives answers that are not true. The table below lists some useful metrics:

| Metric | Description |
|---|---|
| Perplexity | Measures how well the model predicts a given text; lower is better. High values signal uncertainty and may mean more hallucinations. |
| Semantic Coherence | Checks if the answer makes sense and stays on topic. |
| Semantic Similarity | Measures how closely the answer matches the question’s theme. |
| Answer/Context Relevance | Looks at whether the answer fits the question and context. |
| Reference Corpus Comparison | Compares the answer to trusted sources to find errors. |
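
As an illustration of the first metric, perplexity can be computed from the probabilities a model assigned to each token it produced. This is a minimal sketch: the probability lists are hypothetical, and a real evaluation would read them from the model's output.

```python
import math

def perplexity(token_probs):
    """Perplexity is the exponential of the average negative log-probability per token."""
    if not token_probs:
        raise ValueError("token_probs must not be empty")
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)

confident = [0.9, 0.8, 0.95]  # hypothetical per-token probabilities
uncertain = [0.2, 0.1, 0.3]

print(round(perplexity(confident), 2))
print(round(perplexity(uncertain), 2))
```

Low perplexity does not guarantee a true answer, because a model can be confidently wrong. This is why the table pairs it with comparisons against trusted sources.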

You should reward AI for saying “I don’t know” and deduct points for wrong answers given with confidence. This discourages AI from spreading false facts and makes it safer.

| Evaluation Strategy | Impact on Hallucinations |
|---|---|
| Comprehensive Metrics | Measure factuality, coherence, helpfulness, and calibration. |
| | Reduces the chance of wrong answers given with high confidence. |
| Reward Uncertainty Awareness | Encourages AI to show doubt or ask for more details. |

Note: Using these metrics helps AI avoid making up facts and keeps everyone safer.

Model Abstention Strategies

You can help AI stop guessing by teaching it to say “I don’t know.” Model abstention strategies let AI admit when it is unsure. This reduces made-up answers and helps keep people safe.

Model abstention strategies help AI recognize when it does not have enough information.

These strategies teach AI to show doubt instead of guessing.

Common techniques include fine-tuning, instruction tuning, and learning from human feedback.

In real life, these strategies help keep people safe. For example, in healthcare, AI that says “I am not sure” helps doctors make better choices. This lowers the risk of hallucination.

Callout: Teaching AI to admit doubt is important for reducing AI hallucinations and false facts.
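
At inference time, abstention can be as simple as a confidence threshold. The sketch below assumes the system already produces a confidence score between 0 and 1 for each candidate answer and that those scores are reasonably calibrated. The function name `answer_or_abstain` and the threshold value are hypothetical.

```python
def answer_or_abstain(candidates, threshold=0.75):
    """Return the top answer if its confidence clears the threshold, else abstain.

    candidates: dict mapping answer text to a confidence in [0, 1].
    """
    if not candidates:
        return "I don't know."
    best, confidence = max(candidates.items(), key=lambda item: item[1])
    return best if confidence >= threshold else "I don't know."

print(answer_or_abstain({"Canberra": 0.92, "Sydney": 0.08}))          # Canberra
print(answer_or_abstain({"1887": 0.40, "1901": 0.35, "1893": 0.25}))  # I don't know.
```

Raising the threshold trades coverage for accuracy: the system answers fewer questions but makes fewer confident mistakes.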

How EasySite AI Uses Mix AI Models

EasySite AI uses Mix AI Models to lower hallucination and give better answers. These models combine several methods:

Human-in-the-loop testing: Experts check AI answers for accuracy and meaning.

Fact-checking mechanisms: AI agents check answers against trusted sources.

Multi-agent verification: Different AI agents produce answers and compare them.

Uncertainty awareness: The system shows how sure it is about each answer.

Automated fact verification: AI checks facts against outside data to make sure they are right.

Together, these methods help EasySite AI give more accurate answers and reduce hallucinations and false facts.
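
The source does not describe how EasySite AI implements these methods. As a generic illustration of multi-agent verification, the sketch below accepts an answer only when enough independent agents agree on it. The function `verify_by_agreement`, the `min_agreement` threshold, and the hard-coded answers are all hypothetical.

```python
from collections import Counter

def verify_by_agreement(answers, min_agreement=0.6):
    """Accept the most common answer only if enough agents agree on it."""
    if not answers:
        return None, 0.0
    # Normalize case and whitespace so trivially different answers match.
    normalized = [a.strip().lower() for a in answers]
    top, votes = Counter(normalized).most_common(1)[0]
    agreement = votes / len(normalized)
    return (top if agreement >= min_agreement else None), agreement

# Two of three agents agree, so the answer is accepted.
accepted, score = verify_by_agreement(["Canberra", "canberra ", "Sydney"])
print(accepted, round(score, 2))

# No two agents agree, so the system should abstain or escalate to a person.
rejected, score2 = verify_by_agreement(["1887", "1901", "1893"])
print(rejected, round(score2, 2))
```

Agreement is only a signal, not proof. Agents that share the same flawed training data can agree on a wrong answer, so this check works best alongside verification against trusted sources.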

Practical Steps for Developers

If you want to reduce false facts and make AI safer, take these steps:

Train models on diverse, high-quality data.

Use retrieval-augmented generation to connect AI to trusted sources.

Check and update data often to keep it correct.

Monitor AI answers and check facts before sharing them.

Reward AI for showing doubt and penalize confident mistakes.

Have people review important answers.

Taking these steps helps keep AI from spreading false facts and keeps users safe.
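
Retrieval-augmented generation, one of the steps above, can be sketched in a few lines. This example assumes a tiny in-memory document list and uses word overlap as a stand-in for real vector search. The helper names and the prompt wording are hypothetical, and the call to an actual language model is left out.

```python
def tokenize(text):
    """Lowercase and split into a set of words, stripping simple punctuation."""
    return {w.strip(".,?!").lower() for w in text.split()}

def retrieve(question, documents, top_k=1):
    """Rank documents by word overlap with the question (a stand-in for vector search)."""
    q = tokenize(question)
    ranked = sorted(documents, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:top_k]

def build_prompt(question, documents):
    """Put retrieved passages in the prompt and tell the model to stay within them."""
    context = "\n".join(f"- {d}" for d in retrieve(question, documents))
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say \"I don't know.\"\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )

DOCS = [
    "Canberra is the capital of Australia.",
    "The Great Barrier Reef lies off the coast of Queensland.",
]
prompt = build_prompt("What is the capital of Australia?", DOCS)
print(prompt)
```

The prompt grounds the model in retrieved text and gives it explicit permission to abstain, which combines two of the steps listed above.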

You now know that hallucinations in large language models come from how AI is trained and evaluated, not from random errors. You can lower these mistakes by using high-quality training data and strong evaluation metrics. Mix AI Models and newer methods, like retrieval-augmented generation and chain-of-thought reasoning, help you get more reliable AI answers. When you use AI with layered defenses and regular checks, you build trust and improve accuracy.

Bigger and newer AI models need many layers of protection.

You should always monitor AI systems and adjust them as needed.

Checking the references AI cites is key to keeping things safe.

| Strategy | Description |
|---|---|
| Retrieval-augmented generation | Uses trusted, up-to-date data to make AI more accurate. |
| Chain-of-thought reasoning | Lets AI show its reasoning, so answers are easier to understand and check. |
| Ongoing monitoring | Checks AI answers often to find and fix mistakes. |
| Adaptive learning | Helps AI improve by learning from new data and feedback. |

If you use these steps, you help make AI safer and easier to trust for everyone.

FAQ

What is AI hallucination in simple terms?

AI hallucination happens when an AI gives you an answer that sounds real but is actually made up or false. You might see this when a chatbot invents facts or gives wrong information.

How can you spot AI hallucination?

You can spot AI hallucination by checking facts from trusted sources. If an answer seems strange or too perfect, look it up. Always double-check important details, especially for school or work.

Why does AI sometimes make up facts?

AI learns from lots of data. If it does not find the right answer, it might guess or fill in gaps. This guessing can lead to made-up facts or errors.

What steps help reduce AI hallucination?

Tip: Use high-quality data, check answers often, and reward AI for showing doubt.

You can also use tools like retrieval-augmented generation and human review to catch mistakes early.
